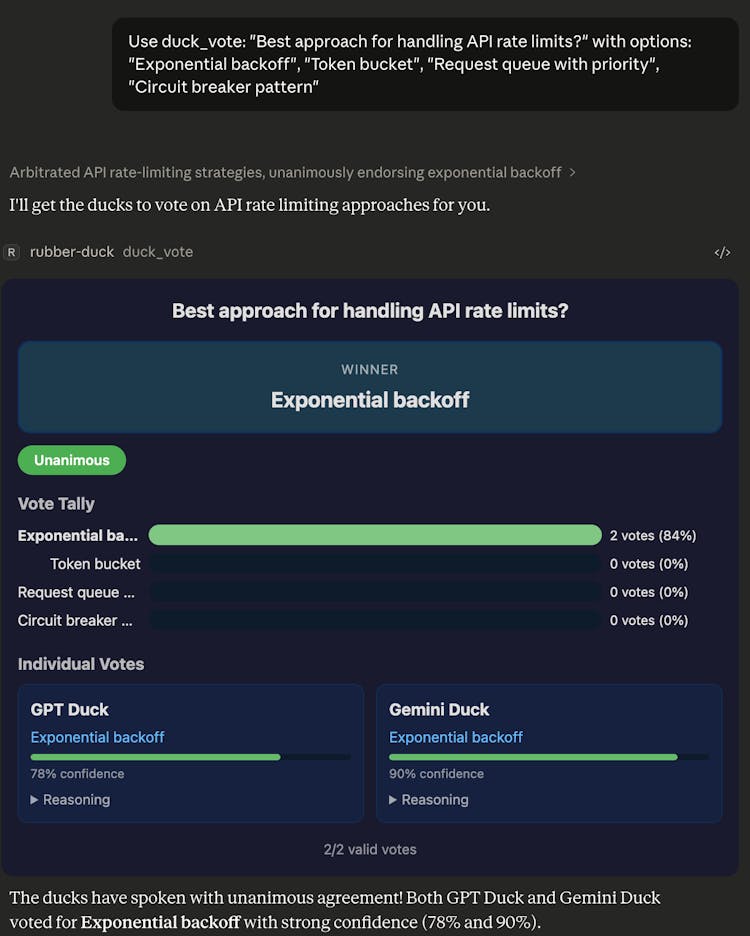

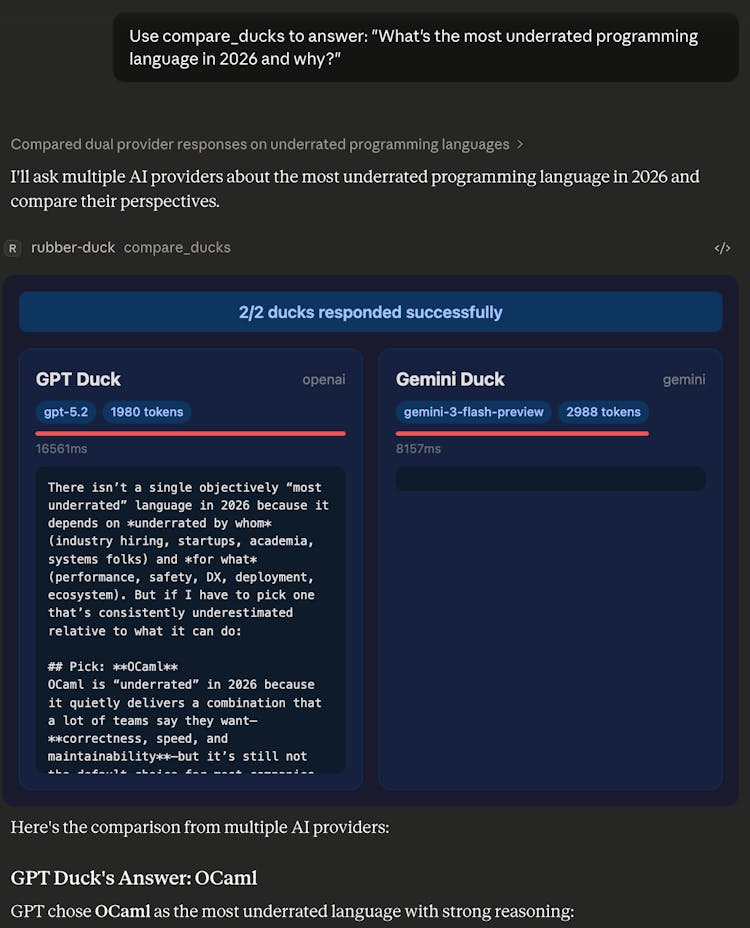

An MCP server that queries multiple LLMs in parallel from AI coding tools. Ask OpenAI, Gemini, Groq, or any OpenAI-compatible API the same question and compare answers side by side. Run consensus votes, structured debates, and judge evaluations. Works with Claude Desktop, Cursor, VS Code, and CLI agents like Claude Code, Codex, and Gemini CLI.

About this launch

MCP Rubber Duck by Mike will be launched on July 13th, 2027.